93.3% on LoCoMo-Plus: How Kumiho's Graph-Native Memory Doubles the Best AI Can Do

What happens when you ask an AI about something you told it nine months ago — in completely different words?

That's the challenge behind LoCoMo-Plus, a new benchmark from Xi'an Jiaotong University (arXiv 2602.10715) that tests whether AI memory systems can connect the dots across long conversations when there's no obvious link between "what was said" and "what's being asked." Think of it as testing whether your AI assistant truly understands your conversations — or just pattern-matches on keywords.

The best result anyone had published? 45.7% from Gemini 2.5 Pro — Google's flagship model with over a million tokens of context.

Kumiho Cognitive Memory scores 93.3%.

That's not a typo. Let's break down how.

The Problem: AI Memory Is Broken

Today's AI assistants have a memory problem. They can remember what you said five minutes ago (context window), but ask them about a conversation from three months back? You'll get a blank stare or, worse, a confident fabrication.

Existing approaches fall into two camps:

- Bigger context windows: Stuff everything in and hope the model finds it. Gemini 2.5 Pro can hold 1M+ tokens. It still only gets 45.7%.

- RAG-based memory: Vector search over past conversations. Better than nothing, but when the words don't match between what you said and what you're asking, it falls apart. Plain RAG gets 24-30%.

The fundamental issue: human conversations are full of implicit connections. When you mention being careful about desserts, the AI needs to recall that six months ago you decided to quit sugar, even though "desserts" and "quit sugar" share no words. This is what LoCoMo-Plus measures.

The Results

LoCoMo-Plus: Implicit Cognitive Memory

401 test entries. Four constraint types (causal, state, goal, value). Time gaps from two weeks to over a year.

| System | Accuracy |
|---|---|
| RAG (text-embedding-large) | 29.8% |
| Mem0 | 41.4% |
| A-Mem | 42.4% |
| GPT-4o (full context) | 41.9% |
| Gemini 2.5 Pro (1M context) | 45.7% |
| Kumiho Cognitive Memory | 93.3% |

The gap is 47.6 percentage points over the previous best (93.3% vs. 45.7%). Kumiho doesn't just win; its score is more than double that of every other system in the table.

And our recall accuracy? 98.5%. Out of 401 entries, Kumiho retrieves the right memory 395 times. End to end, 93.3% of 401 leaves 27 wrong answers, and 78% of those are the answer model ignoring context it successfully retrieved, not a memory failure.
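The headline figures can be cross-checked with a few lines of arithmetic. This is only a sanity-check sketch using the numbers quoted above; the variable names are ours, and it assumes that every recall miss also ends in a wrong answer, which the post does not state explicitly.

```python
# Figures quoted in the post.
TOTAL_ENTRIES = 401
KUMIHO_ACCURACY = 93.3   # percent, end-to-end
PREVIOUS_BEST = 45.7     # percent, Gemini 2.5 Pro
CORRECT_RECALLS = 395

# Gap over the previous best, in percentage points.
gap_points = round(KUMIHO_ACCURACY - PREVIOUS_BEST, 1)

# Recall accuracy and the number of recall misses.
recall_pct = round(100 * CORRECT_RECALLS / TOTAL_ENTRIES, 1)
recall_misses = TOTAL_ENTRIES - CORRECT_RECALLS

# End-to-end wrong answers implied by the accuracy figure.
wrong_answers = TOTAL_ENTRIES - round(TOTAL_ENTRIES * KUMIHO_ACCURACY / 100)

# ASSUMPTION: each recall miss produces a wrong answer, so the rest of
# the errors happened even though the right memory was retrieved.
answer_side_errors = wrong_answers - recall_misses
answer_side_share = round(100 * answer_side_errors / wrong_answers)

print(gap_points, recall_pct, wrong_answers, answer_side_share)
```

Under that assumption the numbers line up: 6 recall misses plus 21 answer-side errors give the 27 wrong answers, and 21 of 27 is the quoted 78%.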

LoCoMo: Standard Conversational QA

To make sure this wasn't a one-trick result, we also ran the original LoCoMo benchmark — 1,986 factual questions across five categories.

| System | Overall F1 |
|---|---|
| OpenAI Memory | ~0.343 |
| Zep | n/a |
| Mem0 | ~0.40 |
| Mem0-Graph | ~0.40 |
| Kumiho | 0.565 |

That is roughly 16.5 F1 points above Mem0 (0.565 vs. ~0.40) on the official token-level F1 metric. Our adversarial score (correctly refusing to fabricate answers) hits 97.5%, which is critical for any production system where trust matters.
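For readers unfamiliar with token-level F1, here is a minimal sketch of the generic SQuAD-style formulation: the harmonic mean of token precision and recall between a predicted and a reference answer. The function name `token_f1` is ours, and the official LoCoMo scorer may normalize text differently (for example punctuation stripping or stemming), so treat this as an illustration of the metric rather than the benchmark's exact code.

```python
from collections import Counter


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token-level precision and recall (generic form)."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    # Two empty answers count as a match; one empty answer as a miss.
    if not pred_tokens or not ref_tokens:
        return float(pred_tokens == ref_tokens)
    # Multiset intersection counts each shared token at most as often
    # as it appears in both answers.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


print(token_f1("she quit sugar in May", "quit sugar"))
```

A verbose but correct answer is penalized on precision, which is one reason F1 scores on this kind of benchmark sit well below judged-accuracy scores.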

How It Works: Three Innovations

Kumiho doesn't use a bigger model. It doesn't use a longer context window. It uses a fundamentally different approach to organizing memory.

1. Prospective Indexing — Remembering What Things Mean for the Future

This is the core insight. When Kumiho stores a conversation, it doesn't just summarize what happened. It asks: "When would this memory be relevant again?"

Example:

You tell the AI: "My lactose intolerance got so bad that even a splash of milk put me in bed all day."

Traditional systems store a summary about lactose intolerance. Kumiho also generates forward-looking implications:

"Months later, Caroline carefully reads restaurant menus before agreeing to dinner dates, prioritizing her dietary restrictions over social convenience."

Three months later, you say: "I finally said yes to a dinner date, but I picked the place solely because I know I won't end up doubled over afterward."

The words "dinner date" and "picked the place" have zero overlap with "lactose intolerance" and "splash of milk." But the prospective implication bridges the gap. The AI connects the dots because it anticipated this kind of future relevance at write time.

This is how human memory works. We don't just store facts — we encode what they mean for our future selves.
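The effect of prospective indexing can be illustrated with a toy retrieval sketch. Everything here is an assumption for illustration: `MemoryRecord`, `overlap_score`, and the stopword list are hypothetical names, plain word overlap stands in for whatever retrieval Kumiho actually uses, the summary wording is ours, and the implication is hard-coded from the example above rather than generated by a model at write time.

```python
import re
from dataclasses import dataclass, field

# Tiny illustrative stopword list (hypothetical, not Kumiho's).
STOPWORDS = {"a", "an", "the", "i", "to", "of", "in", "is", "s", "t",
             "her", "but", "over", "up", "all"}


def tokens(text: str) -> set[str]:
    """Lowercased content words of a text."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


@dataclass
class MemoryRecord:
    summary: str
    # Forward-looking implications written at storage time.
    implications: list[str] = field(default_factory=list)

    def index_text(self, prospective: bool) -> str:
        parts = [self.summary]
        if prospective:
            parts += self.implications
        return " ".join(parts)


def overlap_score(query: str, record: MemoryRecord, prospective: bool) -> int:
    """Number of content words shared by the query and the indexed text."""
    return len(tokens(query) & tokens(record.index_text(prospective)))


record = MemoryRecord(
    summary="Caroline's lactose intolerance is severe; even a splash of "
            "milk put her in bed all day.",
    implications=[
        "Months later, Caroline carefully reads restaurant menus before "
        "agreeing to dinner dates, prioritizing her dietary restrictions "
        "over social convenience."
    ],
)
query = ("I finally said yes to a dinner date, but I picked the place solely "
         "because I know I won't end up doubled over afterward.")

print(overlap_score(query, record, prospective=False))
print(overlap_score(query, record, prospective=True))
```

With only the summary indexed, the query shares no content words with the memory; once the implication is indexed alongside it, the two meet on "dinner". A real system would use semantic similarity rather than exact word overlap, but the mechanism is the same: the bridge is built at write time, not at query time.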

2. Event Extraction — Preserving What Summaries Lose

When conversations are summarized, specific details get compressed away. "Her phone battery died during an important call and she couldn't reach anyone for two hours" becomes "discussed technology challenges."

Kumiho extracts structured events with consequences during consolidation:

Key events:

- Phone battery died during important call → Reconsidered digital dependency

- Joanna decided to quit sugar → Improved energy levels

These granular causal chains survive summarization and provide the factual anchors that later trigger queries refer back to.
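One way to picture the extracted events is as small cause-and-consequence records. The sketch below parses the "cause → consequence" lines shown above into such records; the `Event` class and `parse_events` helper are hypothetical, and Kumiho's actual event schema is not described in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    cause: str
    consequence: str


def parse_events(lines: list[str]) -> list[Event]:
    """Turn 'cause → consequence' bullet lines into Event records."""
    events = []
    for line in lines:
        text = line.lstrip("- ").strip()  # drop the bullet marker
        if "→" not in text:
            continue  # skip lines without an explicit consequence
        cause, consequence = (part.strip() for part in text.split("→", 1))
        events.append(Event(cause, consequence))
    return events


raw_lines = [
    "- Phone battery died during important call → Reconsidered digital dependency",
    "- Joanna decided to quit sugar → Improved energy levels",
]
events = parse_events(raw_lines)
for event in events:
    print(f"{event.cause!r} led to {event.consequence!r}")
```

Keeping cause and consequence as separate fields means either side can be matched by a later query, which a one-line summary would not allow.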

3. Client-Side LLM Reranking — Your Agent IS the Reranker

When the memory system returns stacked conversation history about a topic, someone needs to pick the most relevant piece. Instead of running a dedicated (and expensive) reranking model, Kumiho asks the AI agent that's already talking to you to evaluate what's most relevant.

The agent already knows the full context of what you're discussing. It's a better reranker than any standalone model — and it costs nothing extra because the agent is already running.
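A minimal sketch of the idea, assuming the agent is exposed as a plain text-in, text-out callable. The prompt wording, `build_rerank_prompt`, and `rerank_with_agent` are all hypothetical and not Kumiho's API; the stub agent stands in for the live model.

```python
import re
from typing import Callable, Sequence


def build_rerank_prompt(query: str, candidates: Sequence[str]) -> str:
    """Ask the agent to choose among numbered candidate memories."""
    numbered = "\n".join(f"[{i}] {text}" for i, text in enumerate(candidates))
    return (
        "Pick the memory most relevant to the user's message.\n"
        f"User message: {query}\n"
        f"Candidate memories:\n{numbered}\n"
        "Reply with the number of the best candidate only."
    )


def rerank_with_agent(
    query: str, candidates: Sequence[str], agent: Callable[[str], str]
) -> str:
    """Return the candidate the agent selects, else the top retrieved one."""
    if not candidates:
        raise ValueError("no candidates to rerank")
    reply = agent(build_rerank_prompt(query, candidates))
    match = re.search(r"\d+", reply)
    index = int(match.group()) if match else 0
    if not 0 <= index < len(candidates):
        index = 0  # unusable reply: fall back to retrieval order
    return candidates[index]


def stub_agent(prompt: str) -> str:
    # Stand-in for the agent already in the conversation.
    return "1"


candidates = ["Discussed technology challenges", "Joanna decided to quit sugar"]
print(rerank_with_agent("I'm being careful about desserts", candidates, stub_agent))
```

The fallback matters in practice: an agent reply that cannot be parsed should degrade to the retrieval order rather than fail the whole recall.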

Context Windows Are Not the Answer

Perhaps the most striking finding: Gemini 2.5 Pro with a 1M+ token context window gets 45.7%. It can literally fit the entire conversation history without any summarization. And it still fails more than half the time.

Why? Because finding a needle in a haystack doesn't work when the needle looks nothing like what you're searching for. Implicit cognitive connections require understanding and organization — not just capacity.

Kumiho achieves 93.3% with GPT-4o-mini handling most operations. That's one of the cheapest models available. The lesson: cognitive memory is an organization problem, not a scale problem.

The Numbers in Detail

Performance by Constraint Type

| Type | Accuracy | What it tests |
|---|---|---|
| Causal | 96.0% | "X happened → Y changed" |
| State | 96.0% | "Their situation is now Z" |
| Value | 96.0% | "They believe/prefer X" |
| Goal | 85.0% | "They're working toward X" |

Goal constraints are the hardest — connecting "can't believe they charge that much for a car key" to "saving for an engagement ring" requires abstract reasoning about money management. Even here, Kumiho nearly doubles the previous best.

No Time Cliff

Memory systems typically degrade sharply over time. Kumiho doesn't:

| Time Gap | Accuracy |
|---|---|
| ≤ 2 weeks | 88.6% |
| 2 weeks – 1 month | 97.4% |
| 1 – 3 months | 93.9% |
| 3 – 6 months | 93.5% |
| > 6 months | 84.4% |

Before prospective indexing, our >6 month accuracy was 43.8%. Now it's 84.4% — an improvement of 40.6 percentage points at the hardest time horizon.

Cost

The entire 401-entry benchmark costs ~$14 using GPT-4o-mini for pipeline operations and GPT-4o for answer generation. For comparison, running the same benchmark on Gemini 2.5 Pro's full-context approach would cost significantly more — for less than half the accuracy.

| Component | Cost |
|---|---|
| Memory consolidation (10,000+ sessions) | ~$3 |
| Event extraction | ~$1 |
| Prospective indexing | ~$1 |
| Query reformulation | ~$0.50 |
| Graph edge discovery | ~$2 |
| Answer generation (GPT-4o) | ~$3 |
| Judging | ~$0.50 |
| Total | ~$14 |

Model-Decoupled Architecture

The memory system works with any LLM. The same pipeline, zero changes:

| Answer Model | Accuracy | Cost per entry |
|---|---|---|
| GPT-4o-mini | ~88% | ~$0.003 |
| GPT-4o | 93.3% | ~$0.02 |

Recall accuracy stays at 98.5% regardless of which model answers, so end-to-end accuracy should rise as answer models improve, with no changes to the memory pipeline.
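The decoupling can be pictured as the memory layer producing a prompt that any answer model can consume. This is a sketch under assumptions: `AnswerModel`, `build_answer_prompt`, and `answer_with_memory` are hypothetical names, and the two stub functions merely stand in for a cheaper and a stronger model.

```python
from typing import Callable, Sequence

# Any text-in, text-out model can fill this role (hypothetical alias).
AnswerModel = Callable[[str], str]


def build_answer_prompt(question: str, recalled: Sequence[str]) -> str:
    """Assemble the prompt from recalled memories, independent of the model."""
    context = "\n".join(f"- {memory}" for memory in recalled)
    return f"Relevant memories:\n{context}\n\nQuestion: {question}"


def answer_with_memory(
    question: str, recalled: Sequence[str], answer_model: AnswerModel
) -> str:
    # Recall has already happened; only this last call depends on the model.
    return answer_model(build_answer_prompt(question, recalled))


def small_model(prompt: str) -> str:
    return "small-model answer"


def large_model(prompt: str) -> str:
    return "large-model answer"


recalled = ["Joanna decided to quit sugar → Improved energy levels"]
question = "Why is she careful about desserts?"
for model in (small_model, large_model):
    print(answer_with_memory(question, recalled, model))
```

Because both models receive the same prompt, swapping one for the other changes answer quality and cost but leaves recall untouched.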

Reproducing the Results

The benchmark suite is open. Run it yourself:

```bash
git clone --recurse-submodules https://github.com/kumihoclouds/kumiho-benchmarks.git
cd kumiho-benchmarks
pip install -r kumiho_eval/requirements.txt

# LoCoMo-Plus (401 entries)
python -m kumiho_eval.locomo_plus_eval \
  --concurrency 16 \
  --entry-concurrency 4 \
  --graph-augmented \
  --recall-mode summarized \
  --answer-model gpt-4o \
  --project benchmark-locomo-plus

# LoCoMo (1,986 questions)
python -m kumiho_eval.locomo_eval \
  --recall-mode summarized \
  --recall-limit 3 \
  --context-top-k 7 \
  --no-judge \
  --answer-model gpt-4o \
  --project benchmark-locomo
```

What This Means

The AI memory problem isn't about context windows or bigger models. It's about how you organize what you know.

Kumiho's graph-native architecture — with prospective indexing, event extraction, and structured consolidation — proves that a well-designed memory system using cheap models can outperform the most powerful AI in the world running on brute force.

Your AI assistant should remember not just what you said, but what it means. That's what cognitive memory is.

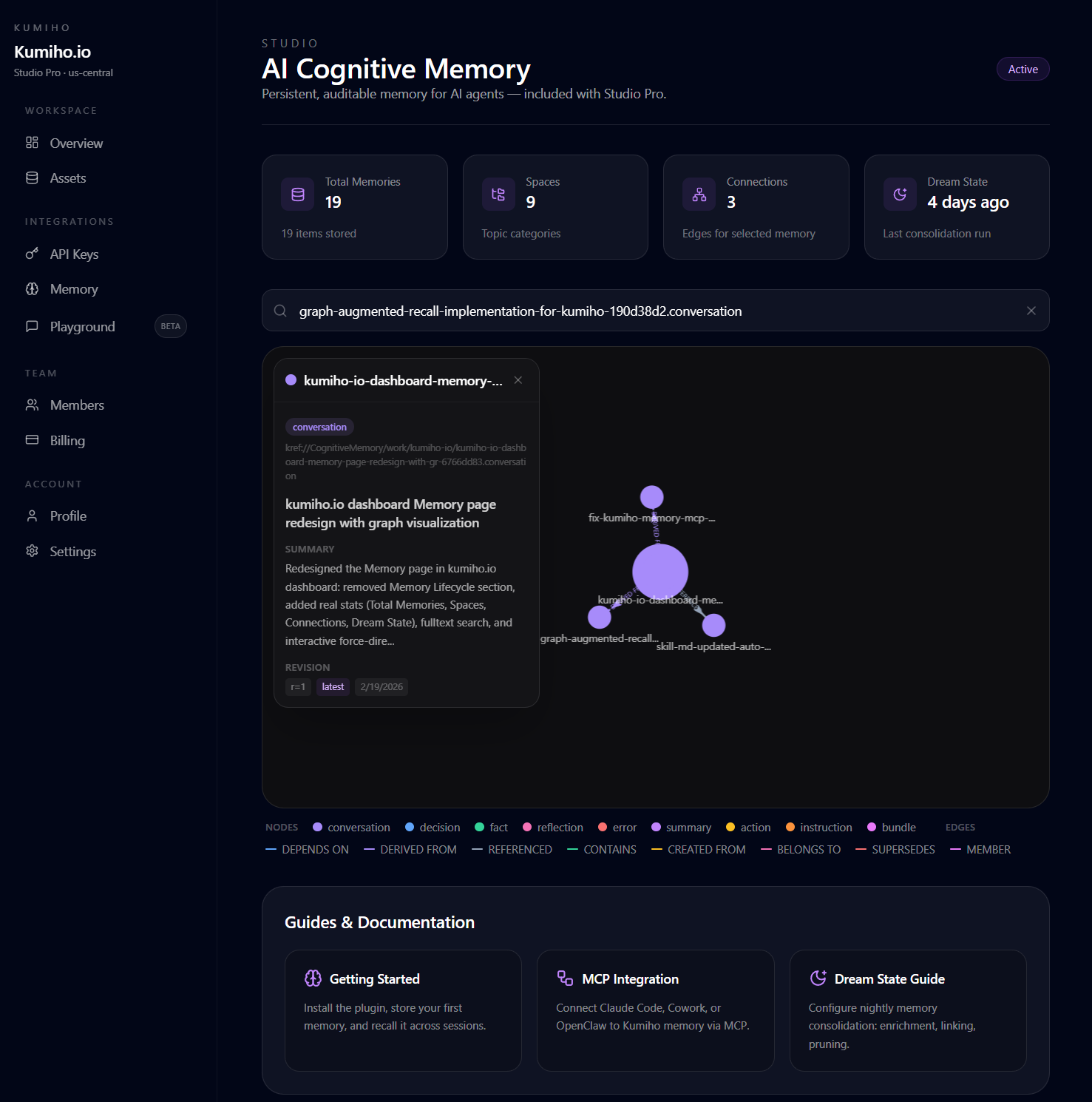

Kumiho Cognitive Memory is available now. Visit kumiho.io to get started, or explore the documentation to learn more about the architecture.

The full benchmark methodology, per-entry results, and reproduction scripts are available in the kumiho-benchmarks repository. Clone it and run the benchmarks yourself — everything is reproducible.